
OpenStack Kolla runs its services in Docker containers. Recently, I was alerted that I was running out of disk space. I cleared out some unused kernels, but also realized that I had 12 GB of Docker logs, as shown below. So I wanted to find an elegant solution to keep that space free for the long term.

# du -ch /var/lib/docker/containers/*/*-json.log
8.0K    /var/lib/docker/containers/0244f6373b66bb31dc959e14ec7bb00aeb59d1b49341490acc6bb4f09a5d402a/0244f6373b66bb31dc959e14ec7bb00aeb59d1b49341490acc6bb4f09a5d402a-json.log
80K     /var/lib/docker/containers/03298b99be331d066481badb01d0d21398525661edf7ab7b06218ba5abc5b25f/03298b99be331d066481badb01d0d21398525661edf7ab7b06218ba5abc5b25f-json.log
70M     /var/lib/docker/containers/07ca03e21b0771149a8367fc9fcce02fd9049fd5f82c8e3f1c3414283868b84d/07ca03e21b0771149a8367fc9fcce02fd9049fd5f82c8e3f1c3414283868b84d-json.log
1.1M    /var/lib/docker/containers/263dfc25ff10994ea39f8c7759da032dda828a7fda70e70333fe95bb84b4737a/263dfc25ff10994ea39f8c7759da032dda828a7fda70e70333fe95bb84b4737a-json.log
1.5G    /var/lib/docker/containers/28ef28add064fd0c86a115b2a1e39e29c22aaff55dd3fa686b5c5737e1dcfd1f/28ef28add064fd0c86a115b2a1e39e29c22aaff55dd3fa686b5c5737e1dcfd1f-json.log
5.7M    /var/lib/docker/containers/2a63fa0aeacc83272012b8a6a4faea3a61c725b0f343d7478dc5db5a88090668/2a63fa0aeacc83272012b8a6a4faea3a61c725b0f343d7478dc5db5a88090668-json.log
28K     /var/lib/docker/containers/37288a8131e2c12498e90421dd84e24774138bc1dc3c0940589cb2def21ec507/37288a8131e2c12498e90421dd84e24774138bc1dc3c0940589cb2def21ec507-json.log
1.2M    /var/lib/docker/containers/3a9e2f81083e882b1aebbbd9776ee270aafea8ff3a4fff1a546d1dbf09d84058/3a9e2f81083e882b1aebbbd9776ee270aafea8ff3a4fff1a546d1dbf09d84058-json.log
23M     /var/lib/docker/containers/3e3fbc9ac409567e9a393d5b634c05168515a0fd05cdcd59dce5c29f840d9365/3e3fbc9ac409567e9a393d5b634c05168515a0fd05cdcd59dce5c29f840d9365-json.log
5.2G    /var/lib/docker/containers/40f7a732c894e11c820a657621127e1a6aa80ec78d3c2d24e912e808ac9f7246/40f7a732c894e11c820a657621127e1a6aa80ec78d3c2d24e912e808ac9f7246-json.log
32K     /var/lib/docker/containers/4974c9676191d92883944096d510923f8436964d4a49b611285ebc123fd32415/4974c9676191d92883944096d510923f8436964d4a49b611285ebc123fd32415-json.log
240K    /var/lib/docker/containers/4c89ee12ac2df14577a86cc1c7f2e520836b7cc993d2b04a9693e3fc1f20540c/4c89ee12ac2df14577a86cc1c7f2e520836b7cc993d2b04a9693e3fc1f20540c-json.log
8.0M    /var/lib/docker/containers/5032e981a2a20b44b0d0bc7d40f1b86bd41e57eab8e1690fce3b5833b684c254/5032e981a2a20b44b0d0bc7d40f1b86bd41e57eab8e1690fce3b5833b684c254-json.log
28K     /var/lib/docker/containers/50afdd850d3f930fd31e8d6a0a993439ee2a6f7961c3e4390dea86671df20833/50afdd850d3f930fd31e8d6a0a993439ee2a6f7961c3e4390dea86671df20833-json.log
76K     /var/lib/docker/containers/5404b2699e5732771d2bc75b138c2a46424678221004e35dd1e26505e2739d57/5404b2699e5732771d2bc75b138c2a46424678221004e35dd1e26505e2739d57-json.log
12M     /var/lib/docker/containers/57086d7387b02a320cc89aa67e267e7c5a0f74be281ead6967e59a8b965ba52f/57086d7387b02a320cc89aa67e267e7c5a0f74be281ead6967e59a8b965ba52f-json.log
16K     /var/lib/docker/containers/74961d08f8c33915d88c92014fcbcb433a4dc64a29bd582cbd9d90952613b46d/74961d08f8c33915d88c92014fcbcb433a4dc64a29bd582cbd9d90952613b46d-json.log
8.0K    /var/lib/docker/containers/74c6befb80aac9631959716b4a31fd5ab12177f38a22753948532b5345b82801/74c6befb80aac9631959716b4a31fd5ab12177f38a22753948532b5345b82801-json.log
653M    /var/lib/docker/containers/7780889aafd4cf17ceed613088684b0d763040f79c3e8a7aa09bbcb16bca456b/7780889aafd4cf17ceed613088684b0d763040f79c3e8a7aa09bbcb16bca456b-json.log
400K    /var/lib/docker/containers/7a6429feea40206c9749714311759b728e7bb8a93783ad2b4f551e2d0880541c/7a6429feea40206c9749714311759b728e7bb8a93783ad2b4f551e2d0880541c-json.log
1.5G    /var/lib/docker/containers/7f99dcab576b332336725b291c9b313b09515e31fb0b6501b42c8dd8f82d235e/7f99dcab576b332336725b291c9b313b09515e31fb0b6501b42c8dd8f82d235e-json.log
17M     /var/lib/docker/containers/858ed8872a4d0006f5c88ee3de02670c80ff59e7db22506dd0617e8b71f2c42d/858ed8872a4d0006f5c88ee3de02670c80ff59e7db22506dd0617e8b71f2c42d-json.log
1.1M    /var/lib/docker/containers/87133b4de74f382bc5f66774f0734e7b333d010c021e8f6b75d81fc847d50e2d/87133b4de74f382bc5f66774f0734e7b333d010c021e8f6b75d81fc847d50e2d-json.log
24K     /var/lib/docker/containers/974f49af9963cf24a6a488e5c0a7f969808355cbfc25b42ac41531db2023202a/974f49af9963cf24a6a488e5c0a7f969808355cbfc25b42ac41531db2023202a-json.log
16K     /var/lib/docker/containers/99eb06c3bc25697bab223375e0065709dca21133448642574b69a4773f8f9c5d/99eb06c3bc25697bab223375e0065709dca21133448642574b69a4773f8f9c5d-json.log
12K     /var/lib/docker/containers/9a02926e775da34f0098babc84943342e2aaaedaa56caa3aeb5233e71b797a38/9a02926e775da34f0098babc84943342e2aaaedaa56caa3aeb5233e71b797a38-json.log
236K    /var/lib/docker/containers/9e2303a0dd36170b53a146e4326ef070216533085b67143e07e6fc82e509f954/9e2303a0dd36170b53a146e4326ef070216533085b67143e07e6fc82e509f954-json.log
56K     /var/lib/docker/containers/a144afb0b5530c2ebbb9a4b669c3e160e9b1adeef12fdd154689612ca5d3cd05/a144afb0b5530c2ebbb9a4b669c3e160e9b1adeef12fdd154689612ca5d3cd05-json.log
664M    /var/lib/docker/containers/abce7b945a9fb94bc148ba2f53255f3300f97996fbef7373aee8b5fa4394f633/abce7b945a9fb94bc148ba2f53255f3300f97996fbef7373aee8b5fa4394f633-json.log
32K     /var/lib/docker/containers/c0a67ef24ea31d5bd98896bb2ea2bd13683ac03a7705803aeefec993f4df6278/c0a67ef24ea31d5bd98896bb2ea2bd13683ac03a7705803aeefec993f4df6278-json.log
1.6G    /var/lib/docker/containers/c0a711c5b59baf775e47d230f8713308ad1c8fd3421c4d18b2bc8bff1f67f36e/c0a711c5b59baf775e47d230f8713308ad1c8fd3421c4d18b2bc8bff1f67f36e-json.log
376K    /var/lib/docker/containers/c0b54e1439d9094c6c1caad7cdaa4a777e26107eb53ac11a13697e46c2090757/c0b54e1439d9094c6c1caad7cdaa4a777e26107eb53ac11a13697e46c2090757-json.log
23M     /var/lib/docker/containers/caacff44c1a32c44b5ae662a011f8755374df085bc285f24a268fb6b0f33742e/caacff44c1a32c44b5ae662a011f8755374df085bc285f24a268fb6b0f33742e-json.log
36K     /var/lib/docker/containers/cd683ed0a3086d2736d46c2248fcf64f8c25a8153bd3024f5bc5caaaa3b2966c/cd683ed0a3086d2736d46c2248fcf64f8c25a8153bd3024f5bc5caaaa3b2966c-json.log
56K     /var/lib/docker/containers/d9c03c2dd2f70f3cc345f2f8e35b2c215277b93148f13b72f9a970293d9afa63/d9c03c2dd2f70f3cc345f2f8e35b2c215277b93148f13b72f9a970293d9afa63-json.log
66M     /var/lib/docker/containers/ddc02fe22c41b75240cf71bf07079834c1deea95063c935ba8eb287e67af791e/ddc02fe22c41b75240cf71bf07079834c1deea95063c935ba8eb287e67af791e-json.log
8.0K    /var/lib/docker/containers/ddc9a874ee47275fe7d139d2ccbf173f2e16d091edd515278abb54398bc50912/ddc9a874ee47275fe7d139d2ccbf173f2e16d091edd515278abb54398bc50912-json.log
21M     /var/lib/docker/containers/df13c5b52ec59a0bcce696c24236ac549bdde863a29d661b3ac398650447a64f/df13c5b52ec59a0bcce696c24236ac549bdde863a29d661b3ac398650447a64f-json.log
24K     /var/lib/docker/containers/e04e35323cd7f64e9a6dccb5c420f72e7800963fbb233b0790314209072ede2b/e04e35323cd7f64e9a6dccb5c420f72e7800963fbb233b0790314209072ede2b-json.log
53M     /var/lib/docker/containers/e396fcc6e624dd0fafcc35823b9e1133031bf64d1fd1bc7409916dc405fa4b03/e396fcc6e624dd0fafcc35823b9e1133031bf64d1fd1bc7409916dc405fa4b03-json.log
548K    /var/lib/docker/containers/f58b6542f00de95aa1395663e4fd813094da2d8f23f220109c2d263c6ab21933/f58b6542f00de95aa1395663e4fd813094da2d8f23f220109c2d263c6ab21933-json.log
12K     /var/lib/docker/containers/f9a66b5458de3b4620eeb80ef5651c2d8ba5826edfdc6a07365711453f53da11/f9a66b5458de3b4620eeb80ef5651c2d8ba5826edfdc6a07365711453f53da11-json.log
4.0K    /var/lib/docker/containers/fde7cadb362d7645a436290f97866a584a375eebac831d14690aded45a51805c/fde7cadb362d7645a436290f97866a584a375eebac831d14690aded45a51805c-json.log
12G     total
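With this many containers, the du listing above is hard to scan for the worst offenders. As a rough sketch (not part of my original fix), the Python below walks the same /var/lib/docker/containers/*/*-json.log pattern and sorts the logs largest first. The function names log_sizes and human are my own, and it reports the file length rather than the on-disk usage that du measures, so the numbers may differ slightly.

```python
from pathlib import Path


def log_sizes(root="/var/lib/docker/containers"):
    """Return (size_in_bytes, path) pairs for every *-json.log under root, largest first."""
    logs = Path(root).glob("*/*-json.log")
    return sorted(((p.stat().st_size, p) for p in logs), reverse=True)


def human(n):
    """Format a byte count roughly the way `du -h` does (powers of 1024)."""
    for unit in ("B", "K", "M", "G"):
        if n < 1024:
            return f"{n:.1f}{unit}"
        n /= 1024
    return f"{n:.1f}T"


def print_report(root="/var/lib/docker/containers", top=5):
    """Print the `top` largest logs (by short container ID) and the grand total."""
    sizes = log_sizes(root)
    for size, path in sizes[:top]:
        # The parent directory name is the full container ID; 12 chars is the usual short form.
        print(f"{human(size):>8}  {path.parent.name[:12]}")
    print(f"{human(sum(size for size, _ in sizes)):>8}  total")
```

Calling print_report() as root (the log directory is normally not readable by other users) prints the five largest logs followed by the total.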

My solution was to create the file /etc/logrotate.d/docker-logs with the following content:

/var/lib/docker/containers/*/*.log {
    rotate 7
    daily
    compress
    size=50M
    missingok
    delaycompress
    copytruncate
}

Once the file was written, I forced a logrotate run:

logrotate --force /etc/logrotate.d/docker-logs

It took a couple of minutes, and the size was down to 4.0K. Hopefully it won't get to the GB range again.

Resources: https://blog.birkhoff.me/devops-truncate-docker-container-logs-periodically-to-free-up-server-disk-space/

Note: I found much of this through various blogs and common knowledge, but then I came across the blog above, whose author had a similar issue and ended up with a very similar solution. So the meat of the content is the same, but this is written by me.

Setting up a new wifi router can be a relatively quick and easy process. Here's my personal checklist of items that I try to do.

Essential Quick To-Do's:

Encryption, Ranked from best to worst:

Optional Advanced Stuff:

Current Setup:

macOS 10.15 (Catalina)
Java SE Development Kit 13.0.1

My frustration began when I was paying for gigabit fiber and only getting downloads of a few hundred Mbps. So here is the guide I used to help speed up my wifi with my new Ubiquiti nanoHD Access Point.

First, remember that your connection speed in Mbps is, in reality, 8x smaller once you convert it to the more familiar MB/s, since there are 8 bits in a byte:

1000 Mbps = 125 MB/s
500 Mbps = 62.5 MB/s
100 Mbps = 12.5 MB/s
50 Mbps = 6.25 MB/s
10 Mbps = 1.25 MB/s

Don't be too disheartened about speeds though; Netflix recommends 5 Mbps for HD and 25 Mbps for UHD, so you'll be okay.

Hardware:

2.4 GHz:

5 GHz:

Caution:

Testing:

Resources:
https://help.ubnt.com/hc/en-us/articles/360012947634-UniFi-Troubleshooting-Slow-Wi-Fi-Speeds-
https://help.ubnt.com/hc/en-us/articles/115011813968-UniFi-AirTime-What-s-Eating-your-Wi-Fi-Performance-

Setup:

Goal:

Issues:

Migrating VMs from 2012R2 to 2016:

1. Open PowerShell in Windows 2016 and create a root certificate:

PS> New-SelfSignedCertificate -Type "Custom" -KeyExportPolicy "Exportable" -Subject "CN=<<WINDOWS 2016 LOCALHOST SERVER NAME>>" -CertStoreLocation "Cert:\LocalMachine\My" -KeySpec "Signature" -KeyUsage "CertSign"

Now that you've deployed OpenStack from my previous post, it's time to configure OpenStack so that we can use it. This will be a short post showing you the commands necessary to create a functional external network for VMs to reach, along with creating users, setting up local networking, updating security groups, and creating flavors and images. It sounds like a lot, but it's easy to do with the CLI.

####################
# VIRTUAL INTERFACES
####################
auto eno1
auto eno1:1
iface eno1:1 inet static
    address 192.168.6.31
    netmask 255.255.255.0
auto eno1:2
iface eno1:2 inet static
    name exteno
    address 10.245.126.5
    netmask 255.255.255.0
    gateway 10.245.126.253
    dns-nameservers 10.245.0.10
    mtu 1500
#########################
# END VIRTUAL INTERFACES
#########################

Let's begin....

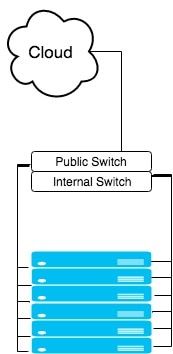

Node setup: First, on all of your controllers, we will need to install bridge-utils and update the /etc/network/interfaces file. In the file below, my eno1 is internal and my eno2 is public facing. I converted my eno2 into a bridge:

A few ansible settings that I like to have to help speed up the fun....

[defaults]
host_key_checking=False
pipelining=True
forks=100
gathering=smart

host_key_checking: Setting this to False disables host key checking. This will stop the annoying "WARNING: REMOTE HOST IDENTIFICATION HAS CHANGED!" message. (Checking is on by default since V1.3.)

pipelining: This will help speed up Ansible significantly by reducing the number of SSH operations needed to run each module.

forks: This increases the number of parallel SSH connections you can have. (By default this is "A very very conservative number since V1.3".)

gathering: Speeds up fact gathering by caching the results. (New since V1.6.)

These settings can be set in any of the following (V1.5+):
* ANSIBLE_CONFIG (an environment variable)
* ansible.cfg (in the current directory)
* .ansible.cfg (in the home directory)
* /etc/ansible/ansible.cfg
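Ansible searches the locations above in the order listed and uses the first file it finds, so a stray ansible.cfg in your working directory can silently override the one in your home directory. The Python sketch below mimics that lookup to show which file would win and what its [defaults] section contains. It is a simplification under my own assumptions: find_ansible_config and read_defaults are hypothetical helper names, not part of Ansible, and real Ansible applies extra checks (for example, it ignores an ansible.cfg in a world-writable directory).

```python
import configparser
import os
from pathlib import Path


def find_ansible_config(cwd=None, home=None, environ=None):
    """Return the first existing config file in Ansible's search order, or None."""
    environ = os.environ if environ is None else environ
    cwd = Path.cwd() if cwd is None else Path(cwd)
    home = Path.home() if home is None else Path(home)

    candidates = []
    if environ.get("ANSIBLE_CONFIG"):
        candidates.append(Path(environ["ANSIBLE_CONFIG"]))
    candidates += [
        cwd / "ansible.cfg",
        home / ".ansible.cfg",
        Path("/etc/ansible/ansible.cfg"),
    ]
    for path in candidates:
        if path.is_file():
            return path
    return None


def read_defaults(path):
    """Return the [defaults] section of an ansible.cfg as a plain dict of strings."""
    parser = configparser.ConfigParser()
    parser.read(path)
    return dict(parser["defaults"]) if parser.has_section("defaults") else {}
```

For example, read_defaults(find_ansible_config()) on a machine with only the ~/.ansible.cfg shown earlier would return the four settings as strings, such as 'forks': '100'.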

But I usually just set it in my home directory:

~/.ansible.cfg

Resources: https://docs.ansible.com/ansible/intro_configuration.html

If you have Ubuntu MAAS (Metal As A Service) and, like most of us, have a hard drive configuration that is over 2 TB, you will run into trouble deploying. At least that was the case for me. In our server room we have Dell R710s with 12 TB of storage (six 2 TB drives). I have these systems configured in RAID 6, giving us 8 TB of space. However, any time I deployed, it would fail. But there was never a problem with our R410s, which have only 900 GB of space. Also, I know that an 8 TB deployment is possible, since we've been doing it with FUEL since Ubuntu 12. So what gives? Was there some sort of limitation mentioned that we overlooked? Not according to the troubleshooting guide [5].

What was/is the problem?

Our Environment:

We have:

Error message:

Error: attempt to read or write outside of disk `hd0'.
Entering rescue mode...
grub rescue>

Attempts:

Fails:

Success!

Conclusion: I do not know why the larger (1 GB) boot directory was critical, but it has worked across all of our R710s while the other attempts failed. I hope this helps someone. I got the idea of the separate partitions from resource [3] below. Hopefully this bug will work itself out as MAAS matures; however, it has been noted since 2014, which is a bit concerning [4].

Resources:
[1] http://en.community.dell.com/techcenter/extras/w/wiki/2837.hdd-support-for-2-5tb-3tb-drives-and-beyond
[2] https://askubuntu.com/questions/495994/what-filesystem-should-boot-be
[3] https://askubuntu.com/questions/470823/ubuntu-14-04-lts-maas-boot-fails-on-fresh-install-on-a-dell-2950
[4] https://bugs.launchpad.net/ubuntu/+source/ubiquity/+bug/1284196
[5] https://docs.ubuntu.com/maas/2.1/en/troubleshoot-faq

A quick way to get the inventory of your hosts and write it to a file:

root@r6-410-1:/home/ubuntu# ansible all -i INVENTORY_FILE -m setup --tree /tmp/facts

This will print the inventory to the terminal and write one file per machine under /tmp/facts. Each file is named after the machine as listed in your inventory file, which could be either an FQDN or an IP address. The files are in JSON format as well for easy viewing. Enjoy!
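Once the files exist, pulling a few values out of them takes only a few lines. The following is a minimal Python sketch, assuming each file holds the setup module's result with the facts under an "ansible_facts" key. The function name summarize_facts and the two facts picked out (ansible_distribution and ansible_memtotal_mb) are just my choices for illustration.

```python
import json
from pathlib import Path


def summarize_facts(tree="/tmp/facts", keys=("ansible_distribution", "ansible_memtotal_mb")):
    """Map each host (the file name) to the selected facts from its JSON file."""
    summary = {}
    for path in sorted(Path(tree).iterdir()):
        if not path.is_file():
            continue
        data = json.loads(path.read_text())
        # "ansible_facts" is absent when the setup module returned no facts for a host.
        facts = data.get("ansible_facts", {})
        summary[path.name] = {key: facts.get(key) for key in keys}
    return summary
```

Calling summarize_facts() after the command above returns a dictionary keyed by host name, with None for any fact a host did not report.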

Author: James Benson is an IT professional.